Deadline: April 12, 2026

Applications are invited for the 2026 Cambridge Boston Alignment Initiative (CBAI) Summer Research Fellowship in AI Safety, an intensive, fully funded, nine-week research program hosted in Cambridge, Massachusetts, from June 8 to August 10.

The fellowship supports talented researchers aiming to advance their careers in AI safety, across interpretability, multi-agent safety, formal verification, risk management frameworks, and other technical and governance domains. Fellows work closely with established mentors and in-house research managers, participate in weekly workshops and speaker events, and gain research experience and connections within the Boston-area AI safety community, including researchers at Harvard, MIT, Northeastern, Boston University, and leading AI safety organizations.

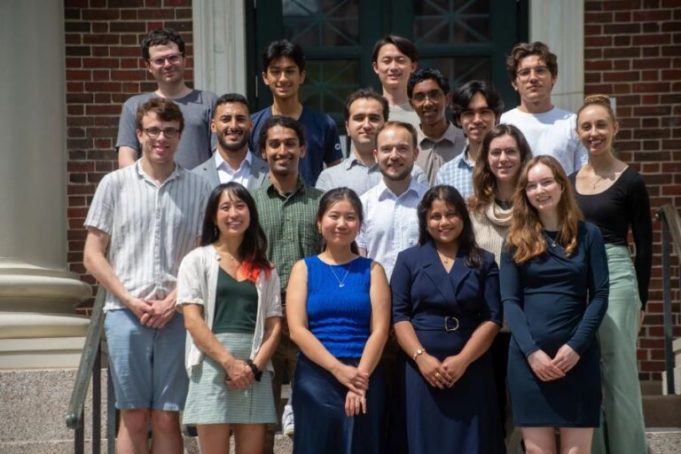

Members of the inaugural cohort have gone on to join Goodfire and Redwood Research, found their own research group, have papers accepted at NeurIPS and ICLR, and share their reports with policymakers in Washington, DC.

Research Tracks

- Technical AI Safety: Research focused on reducing catastrophic risks from advanced AI, including alignment strategies, interpretability, robustness, formal verification, multi-agent safety, and scalable oversight.

- AI Governance: Research and analysis aimed at shaping how AI is developed, deployed, and governed, including policy frameworks, regulatory approaches, international coordination, and institutional mechanisms for managing risks from powerful AI systems.

- Technical Governance: Research at the intersection of technical AI safety and policy, including compute governance, model evaluations, standards development, and institutional mechanisms for ensuring advanced AI systems are safe and beneficial.

- Economics of Transformative AI: Research on the economic causes and consequences of advanced AI development, spanning empirical and modeling approaches to growth, productivity, and labor market dynamics, as well as policy questions around industrial strategy, market concentration, and the political economy of AI governance.

Benefits

- Mentorship (1–2 hours/week) from researchers at institutions including MIT, Harvard, Northeastern, Boston University, the Institute for Progress, AVERI, and RAND.

- Dedicated in-house research managers with experience in interpretability, alignment science, and AI governance & policy.

- Stipend: $10,000 for nine weeks.

- Meals and refreshments: Free lunches and dinners are provided every weekday, along with coffee, beverages, and snacks in the office.

- Accommodation: Housing is provided for the full program for fellows coming from outside Cambridge.

- Office space: 24/7 access to the offices in Harvard Square, conveniently located two minutes from the Harvard Square metro station and five minutes from Harvard Yard.

- Networking opportunities: Extensive collaboration with the Bau Lab at Northeastern, the Tegmark Lab at MIT, the Science of Natural and Artificial Intelligence Group at Harvard, FutureTech at MIT, the Algorithmic Alignment Group at MIT, and other university and lab researchers in the area working on interpretability, formal verification, and AI governance & policy. CBAI also organizes social activities, networking events, and speaker events and workshops with frontier researchers to help fellows explore research directions and develop research taste.

- Research support: Up to $10,000 in compute per fellow, in the form of API credits and on-demand GPUs for experiments, plus conference and workshop support for presenting work produced during the fellowship.

- Extension Fellowship: Fellows who want to continue their research can join an extension program with funding for up to six months.

Eligibility

CBAI welcomes applications from anyone deeply committed to advancing the safety and responsible governance of artificial intelligence. Ideal candidates include:

- Undergraduate, Master’s, and PhD students, as well as postdocs, looking to explore or deepen their engagement with AI safety research.

- Recent graduates who are aiming to transition into technical or governance AI safety research.

- Individuals who are passionate about addressing the risks associated with advanced AI systems.

International students in the US may participate on OPT or CPT; however, CBAI is unable to sponsor visas for this program.

Applicants must be 18 years or older.

Application

The application process consists of four steps:

- General application form

- 15-minute interview with CBAI

- Mentor-specific questions or a test task (if applicable), plus a code screening for technical tracks

- Interview with the mentor

For more information, visit the CBAI Summer Research Fellowship in AI Safety website.